Quantum vs. cloud computing: Key differences?

Quantum and cloud computing are the future of computer operations. Here’s what they are, their applications, and their main differences.

Will quantum computing replace cloud computing?

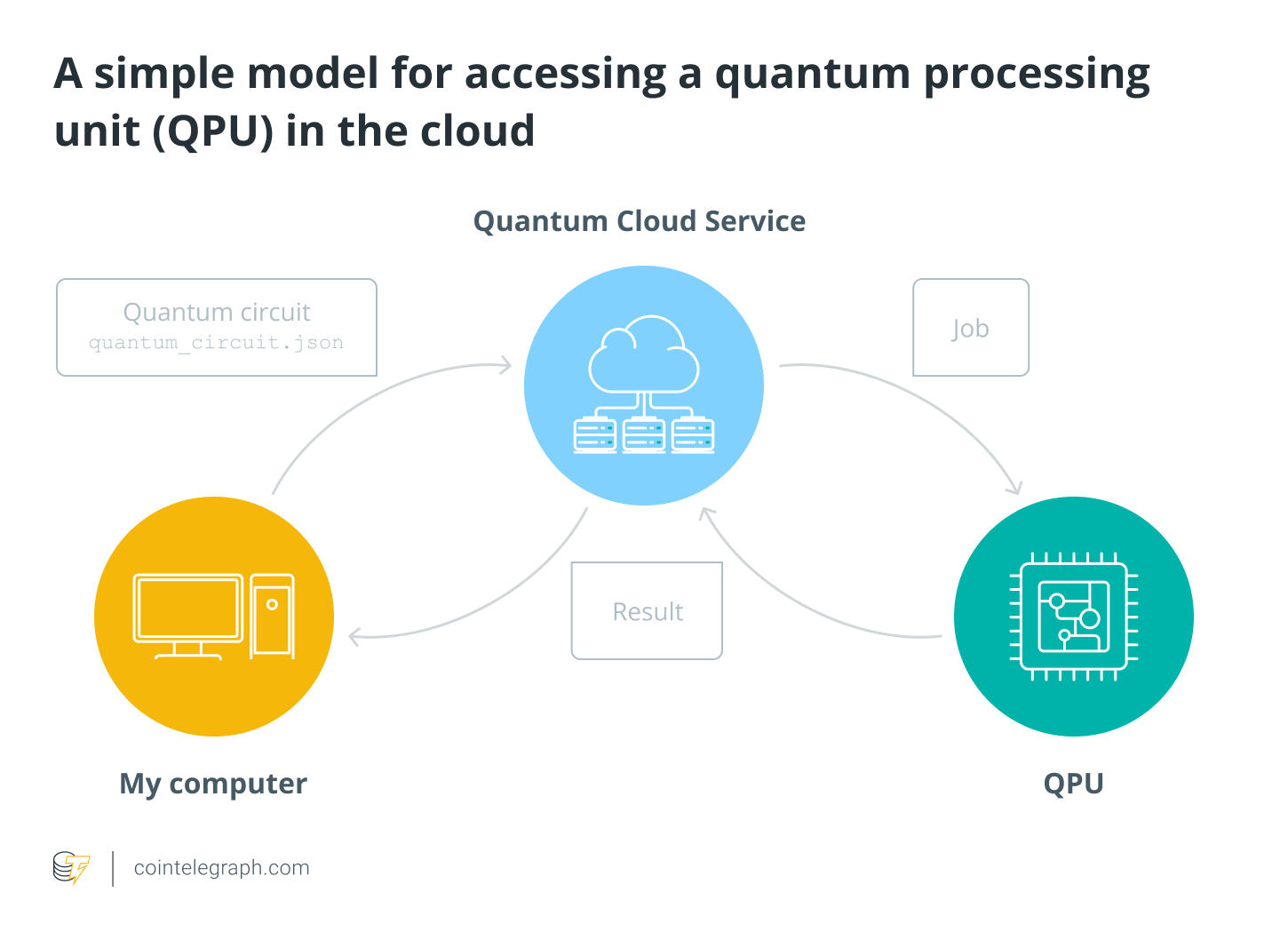

A new trend is emerging: cloud-based quantum computing, which combines the advantages of both technologies by giving users direct access to quantum computers over the web via the cloud.

While quantum computing will not replace the cloud anytime soon, Big Tech companies are working on integrating the two solutions to get the best of both worlds. Such integration can facilitate remote access to quantum computers through the cloud and make them available to a broader range of users.

Cloud-based quantum computers can accelerate the pace of tech innovation, streamlining the work of researchers and developers who could access quantum hardware and computational resources via the cloud, leading to breakthroughs and discoveries faster.

Businesses and individuals must overcome numerous challenges before being ready to integrate quantum and cloud computing. For instance, the complexity of accessing quantum computers and the expertise required to handle them make it challenging for the average person to use quantum solutions in the cloud. Another concern is security, as sensitive quantum algorithms must be safeguarded from unauthorized access or tampering, risks that are more prevalent in the cloud.

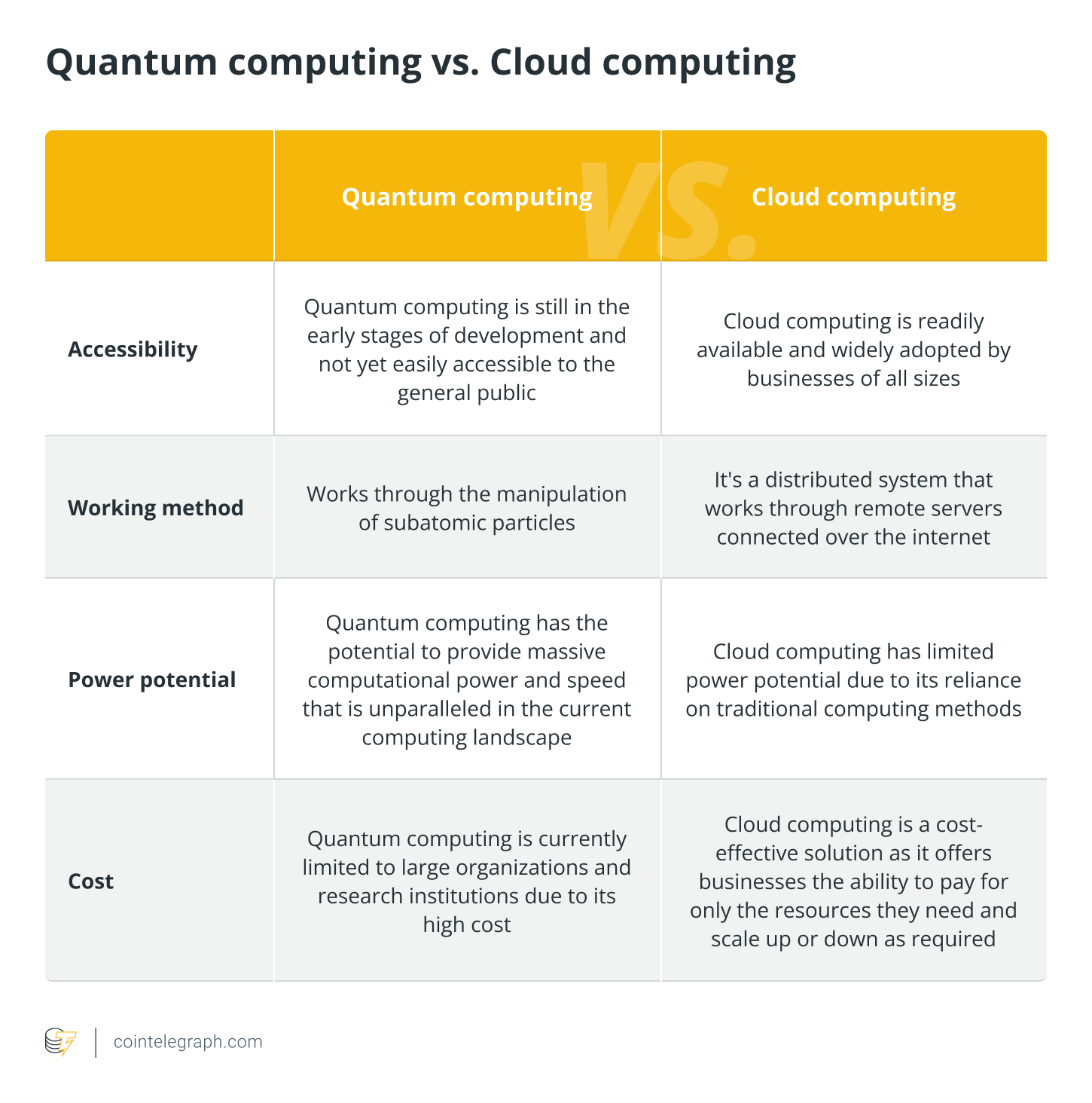

Quantum vs. cloud computing: Which is better?

Both solutions have benefits and drawbacks, and there is no winner between quantum and cloud computing. Eventually, they will be fully integrated to provide compelling and secure solutions for all kinds of businesses and individuals.

Before choosing between quantum and cloud computing, businesses must consider several factors such as cost, availability and specific requirements.

How does cloud computing work?

Cloud computing is hosted by specialized companies that maintain massive data centers to provide the necessary security, storage capacity and computing power for the support of the online infrastructure.

Cloud services are accessible via an internet connection and computing devices such as smartphones, laptops or desktop computers. Users choose a cloud computing hosting company and pay for the right to use its services. Such services include the infrastructure needed to facilitate communication between devices and programs, so that, for example, a file downloaded on a user’s laptop is instantly synced to the file folder on the same user’s iPhone.

Cloud computing has a front end that enables users to access their stored data with an internet browser and a back end made up of servers, computers, databases and central servers.

The central servers follow sets of rules known as protocols to facilitate operations and ensure smooth communication between the cloud-linked devices.

Leading cloud services come from major tech companies and include Amazon Web Services, Microsoft Azure, Apple iCloud and Google Drive.

Advantages and disadvantages of cloud computing

Advantages:

- Cloud computing allows businesses to scale their infrastructure on demand, which means they can quickly and easily add or remove servers as their needs change. This can help businesses handle sudden market demands without worrying about capacity constraints.

- Cloud computing is cost-effective for businesses, as they do not need to invest in expensive hardware or software installations.

- Cloud computing provides easy accessibility to data and applications from anywhere, as long as there is an internet connection.

Disadvantages:

- Security is still a concern for cloud computing due to its reliance on an internet connection, which can be vulnerable to hacking attacks.

- The high centralization of cloud servers means that services may go offline in specific locations during outages. Centralized providers also offer weaker censorship resistance, since a single company controls access.

How does quantum computing work?

While a classical processor uses bits to carry out operations and run programs, a quantum computer uses qubits to run multidimensional quantum algorithms.

Quantum computers run these algorithms on qubits, which can exist in a combination of 0 and 1 simultaneously until they are measured. The size of the multidimensional space the algorithms work in grows exponentially with the number of qubits: each added qubit doubles it.
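The exponential growth in the number of qubits can be made concrete with a small Python sketch. It counts how many numbers (amplitudes) a classical machine would need to store in order to describe a register of qubits. The function names and the figure of 16 bytes per amplitude are assumptions chosen for illustration, not part of any quantum software library.

```python
def state_vector_size(num_qubits: int) -> int:
    """Return how many complex amplitudes describe a register of num_qubits qubits."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    # Every extra qubit doubles the number of basis states.
    return 2 ** num_qubits


def memory_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Estimate the classical memory needed to store the full state.

    bytes_per_amplitude=16 assumes one complex number made of two 64-bit floats.
    """
    return state_vector_size(num_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (1, 2, 10, 30, 50):
        print(n, "qubits ->", state_vector_size(n), "amplitudes,",
              memory_bytes(n), "bytes")
```

Under these assumptions, 30 qubits already correspond to 2^30 amplitudes, or about 16 GiB of classical memory, which gives a sense of why simulating larger quantum systems on conventional hardware quickly becomes impractical.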

Quantum computers are smaller and require less energy than supercomputers, which are computers with a much higher level of performance than general-purpose computers. A quantum processor is about the size of a laptop processor, while a quantum hardware system is made up mostly of cooling systems.

Quantum computers are sensitive tools with high error rates, which are reduced by keeping the hardware at a very cold temperature, about a hundredth of a degree above absolute zero. To reach that temperature, the cooling systems use supercooled superfluids, and at such temperatures certain materials in the processor behave as superconductors.

In a superconductor, electrons can move without resistance, which helps the hardware carry quantum information more efficiently and build the complex multidimensional spaces whose size depends on the number of qubits added.

Advantages and disadvantages of quantum computing

Advantages:

- Opportunities for several industries to develop and design advanced computer programs based on highly accurate, safe and efficient data

- Enhanced data encryption methods that are potentially far harder to break, along with better fraud detection and general security of sensitive data

- Unprecedented data processing speed for certain problems involving vast amounts of data, which are impractical to solve on conventional computers

- Quantum computing can help in the development of new materials, medicines and chemicals by simulating complex molecular structures.

Disadvantages:

- Quantum computers are highly sensitive to external interference, such as temperature and electromagnetic radiation, which can affect the accuracy of the calculations.

- Scarce availability and consequent lack of mass adoption don’t allow developers to assess quantum computers’ features and reliability properly

- The requirement for a large amount of data to function correctly means businesses must invest in enormous data storage systems to accommodate quantum computers.

What is cloud computing?

Cloud computing, or “the cloud,” is the delivery of computing services over the internet, using third-party servers, storage, databases, networking, software, analytics and intelligence to store and process data.

Before cloud computing, businesses had to buy and maintain their own servers containing enough space to prevent downtime and outages and manage peak traffic volume. Still, a lot of server space often went unused, wasting money and resources. The cloud computing ecosystem allows organizations to employ a more efficient and cost-effective solution without requiring expensive hardware, private data centers or software installation, focusing on innovation, dynamic resources and economies of scale.
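The difference between buying servers for peak traffic and renting capacity as needed can be illustrated with a toy calculation. The sketch below is a simplified model, not how any particular provider bills: the function names, the hourly load figures and the capacity of 100 requests per server are all hypothetical.

```python
import math


def servers_needed(load: float, capacity_per_server: float) -> int:
    """Smallest number of servers that can handle the given load."""
    if capacity_per_server <= 0:
        raise ValueError("capacity_per_server must be positive")
    return math.ceil(load / capacity_per_server)


def compare_provisioning(hourly_load, capacity_per_server):
    """Return (fixed_server_hours, on_demand_server_hours).

    Fixed provisioning keeps enough servers for the busiest hour running
    all the time; on-demand provisioning runs only what each hour requires.
    """
    if not hourly_load:
        return 0, 0
    per_hour = [servers_needed(load, capacity_per_server) for load in hourly_load]
    fixed_hours = max(per_hour) * len(per_hour)
    on_demand_hours = sum(per_hour)
    return fixed_hours, on_demand_hours


if __name__ == "__main__":
    # Hypothetical requests per hour, with one traffic spike.
    load = [100, 250, 900, 300]
    fixed, on_demand = compare_provisioning(load, capacity_per_server=100)
    print("fixed:", fixed, "server-hours; on demand:", on_demand, "server-hours")
```

In this made-up example, sizing for the peak costs 36 server-hours against 16 when capacity follows demand. The gap between the two is the unused server space described above.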

The idea behind cloud computing dates back to the 1960s, but it became far more widespread in the 2020s, when organizations needed a more productive computing system to handle the challenges of remote working that emerged during the pandemic.

Thanks to cloud computing, many businesses can share services instantaneously at any time, in addition to managing, accessing and storing data and applications remotely. Using the cloud, they benefit from scalable storage for files, applications and different types of information, saving time and money.

What is quantum computing?

Quantum computing builds on quantum mechanics, a discipline that covers the mathematical description of the properties of nature at the level of atomic and subatomic particles, such as electrons or photons.

Quantum computing uses subatomic particles to build machines that can, for certain problems, calculate and process data at breakneck speed. They achieve this thanks to qubits (quantum bits), which can exist in the one state, the zero state or any linear combination of the two simultaneously. In contrast, the bits in conventional computers’ binary systems exist only in the one (“on” or “true”) or zero (“off” or “false”) state. The ability of a qubit to hold multiple states at once is known as superposition.

In other words, the ability to be in both one and zero states at once lets a quantum computer work with many possible values in a single operation. A binary-based computer system, by contrast, holds one definite value in each bit at any given time.
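A "linear combination" of the zero and one states can be written with two numbers, called amplitudes, one for each state. The following Python sketch models a single qubit in this way and shows how the amplitudes translate into the chance of reading 0 or 1 when the qubit is measured. It is a classical toy model for illustration only; the function names are made up here and do not belong to any quantum computing library.

```python
import math
import random


def normalize(alpha: complex, beta: complex):
    """Scale the two amplitudes so their squared magnitudes sum to 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return alpha / norm, beta / norm


def measurement_probabilities(alpha: complex, beta: complex):
    """Return (probability of reading 0, probability of reading 1)."""
    a, b = normalize(alpha, beta)
    return abs(a) ** 2, abs(b) ** 2


def measure(alpha: complex, beta: complex, rng=random) -> int:
    """Simulate one measurement: the result is a single definite bit."""
    p_zero, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p_zero else 1


if __name__ == "__main__":
    # Equal amplitudes: an even superposition of zero and one.
    print(measurement_probabilities(1, 1))
    # Repeated measurements of freshly prepared qubits give a mix of 0s and 1s.
    print([measure(1, 1) for _ in range(10)])
```

With equal amplitudes, each outcome has a probability of about one half. With amplitudes of 1 and 0, the qubit behaves like an ordinary bit that always reads 0. Either way, a measurement returns a single classical bit, which is why quantum algorithms have to be designed so that the useful answer is the likely outcome.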

Quantum computing emerged back in the 1980s when physicists Richard Feynman and Yuri Manin proposed that quantum theory could be applied to computing, with algorithms that deliver more efficient results than their classical counterparts.

Applied quantum computing is still in its infancy but will impact many industries, especially helping large organizations deal with enormous amounts of data faster and more efficiently.

Because they could solve highly complex problems, such as processing huge amounts of data extremely quickly or providing better prediction models in various fields, quantum computers could also break existing encryption protocols, posing a threat to blockchain technology and cryptocurrencies.

Author: Emi Lacapra